EDITOR'S NOTE:

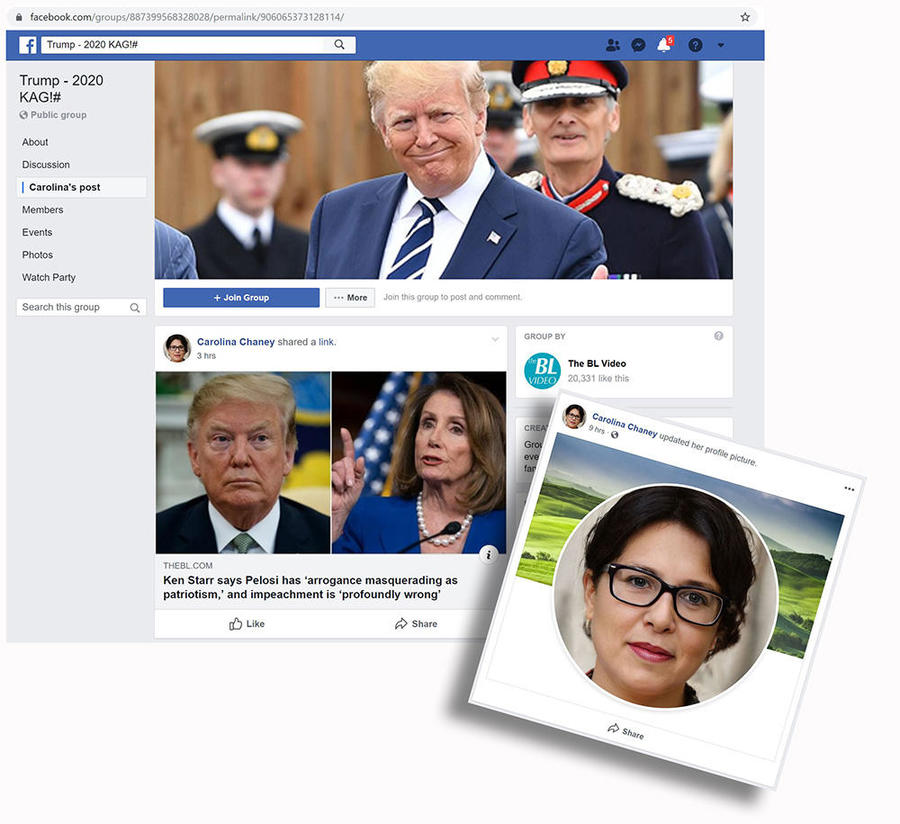

Meet Carolina Chaney. Take a good look at her Facebook profile photo. Maybe she looks like your kid's math teacher or your doctor. Privacy settings block us from seeing anything about her, but we do see she is one of 11 administrators of the "Trump - 2020 KAG!#" Facebook group. Carolina started in that role on Monday, December 9, 2019, and posted two stories on her first day. Those posts suggest that Carolina does not like House Speaker Nancy Pelosi or former Vice President Joe Biden.

Don't try to send Carolina a friend request. She is a person who does not exist. In fact, Carolina's profile image was created by the website ThisPersonDoesNotExist.com.

Sarah Thompson, a very real person and the authenticity analyst for Lead Stories, found Carolina Chaney and hundreds of other non-existent people serving as administrators and members of dozens of Facebook groups that solely promote the content of TheBL.com, a major web publisher. Thompson's previous investigation connected TheBL.com to hundreds of fake Facebook accounts using images of real people taken from other websites, but now she's discovered a new, unreal tactic.

The fake profiles help the publisher distort the popularity of its content, which then makes it more likely to show up on someone's timeline. When it is political content - which most often is what TheBL.com publishes - it is especially concerning.

TheBL is an undeclared offshoot of The Epoch Times and NTD media. NBC Tech News reporters Brandy Zadrozny and Ben Collins wrote about the connections between the Epoch Times and Falun Gong in August 2019. Alex Kasprak and Jordan Liles of Snopes uncovered the links between The Epoch Times and TheBL. I wrote about my own investigation into TheBL network of Facebook pages, groups and profiles for the infosec blog SecJuice.

When I began investigating TheBL network in October 2019, many of its fake Facebook profiles used images taken from the photo-sharing website Unsplash.com, where photographers allow publishers to use their work for free as long as they are credited. TheBL ignored the credit requirement. The images mostly showed young, good-looking people of many races.

They also used repurposed real accounts, sometimes without even changing the name in the link. The URL for the "Kathy Crum" profile (https://www.facebook.com/thom.cuoi), for example, retained the name "Thom Cuoi," indicating the repurposed account originally belonged to someone in Vietnam.

Privacy settings often hid the profile history, but not always. In this case I could see that just a few weeks earlier the profile had posted only in Vietnamese. The fraudulent nature of these profiles is obvious, yet they've been able to game Facebook for months, using false American identities to run more than 200 political pages and groups.

The profile picture "Kathy" used? An Unsplash stock photo by Allef Vinicius:

Photo by Allef Vinicius on Unsplash

In November 2019 I noticed a change in how TheBL network created new fake profiles. No more Unsplash images. The new faces were headshots that I recognized as AI-generated, sourced from ThisPersonDoesNotExist.com. Production of new profiles also seems to be speeding up. Most of the 336 synthetic-face profiles I found (of which 34 have already been deleted) were created since November 19th. Forty-three of them were made on a single Saturday, December 7, 2019.

"Carolina Chaney," for example, was created on Monday morning, December 9, 2019. Just hours later, she was amplifying a link to TheBL.com in one of the network's many groups.

So what is ThisPersonDoesNotExist.com?

In February 2019 Twitter was buzzing about the new website from Philip Wang, ThisPersonDoesNotExist.com. Visitors to the site are greeted by a random face on the screen. There's no article or introduction, just a human face. Refresh the page and a new face appears. You can do this all day and never see the same face twice. If you'd rather see cats, there is also ThisCatDoesNotExist.com.

ThisPersonDoesNotExist.com uses AI to generate endless fake faces

The ability of AI to generate fake visuals is not yet mainstream knowledge, but a new website - ThisPersonDoesNotExist.com - offers a quick and persuasive education. The site is the creation of Philip Wang, a software engineer at Uber, and uses research released last year by chip designer Nvidia to create an endless stream of fake portraits.

What makes this so compelling is that these people aren't real. The faces were created by an algorithm named StyleGAN, developed by NVIDIA. GAN stands for "generative adversarial network." The adversarial part refers to two neural networks competing with each other: one generates images while the other tries to tell the generated images from real ones, and the contest pushes the generator to get better. In this case, it's making a digital representation of a human face that looks like a photograph. The super-realistic synthetic faces are high-resolution 1024x1024 pixel squares and are copyright-free. US copyright law says that in order to be copyrighted, an image must be made by a human.

Be decisive, because if you want to save a picture from ThisPersonDoesNotExist, there's no going back. The face disappears when you refresh the page - replaced by a new image.

Reactions from real people on Twitter when this came out were wide-ranging, from delight to dread. Many people wondered if they might randomly encounter a computer-generated doppelganger. Others predicted that this would be used by scammers and con artists. Actual artists dreamed of creative things that could be done with such realistic copyright-free pictures. Some wondered how long it would take before this delightful innovation was used for some dastardly scheme.

In March 2019, Adam Ghahramani wrote an alarmist piece in VentureBeat about why this technology should be restricted. I wasn't ready to make up my mind. I wanted to know more. The availability of these faces might broaden a criminal's available choices, but there wasn't anything specific a synthetic face offered that wasn't already available with lower-tech options.

More alarm bells rang in June when Raphael Satter's story titled "Experts: Spy used AI-generated face to connect with targets" went out on the Associated Press. Some well-connected people in Washington fell for a LinkedIn profile photo of a beautiful woman with auburn hair and a captivating gaze. She was not real, but a real spy was using the GAN image to lure targets.

How To Detect A GAN Image?

Training myself, part one

I wanted to see if I could learn to spot fake faces generated using the website, so that first week I trained with my kids. It felt like playing, but I was studying. We carefully analyzed each image. In this exercise we had a given: 100 percent of the time, we knew the photo was fake. It was our job to pick out the quirky clues that confirmed it. We learned the patterns in the hair and the stubble and counted teeth: incisors, canines. We called the iridescent blotches that appeared in some images "beetles." We laughed at the funny hats that were sized to fit the entire hairstyle and not just the head. There were some men who seemed to be wearing a needle-felted toupee, and none of the women had a matching pair of earrings. (Kyle McDonald wrote an excellent guide on these telltale signs.)

Training myself, part two

Enter Carl Bergstrom and Jevin West of Calling Bullshit with their practice tool, WhichFaceIsReal.com. It was just what I was hoping for: a game that presented a real and a fake face next to each other. Now we had a different challenge. We knew one of the photos was fake, but which one? Test-taking strategies could come into play. Just guessing randomly could give us a score of 50 percent, but now we had the advantage of being able to rule out the synthetic one if we could identify something real in the other photo. With this tool we learned a new set of things that only real people can have in their photos and that the computer-generated face pictures don't: logos, zippers, printed patterns, hands, watches, identifiable things in the background... and friends. We kept score. Then I tried for speed and tried to learn to trust my instincts. I wanted it to become second nature. It wasn't easy.

Hunting for synthetic faces in the wild

I was already formulating some ideas about geometry when I came across an image from Conspirator0 and Dr ZQ that provided the key I needed. They shared this haunting ethereal visage on Twitter:

Roughly a week ago, an AI for generating synthetic faces was made publicly available at thispersondoesnotexist(dot)com. We used it to generate 5000 sample faces. Pictured below is the result of blending all 5000 images.

-- Conspirador Norteño (@conspirator0) February 16, 2019

cc: @ZellaQuixote pic.twitter.com/F2qTqKPSMu

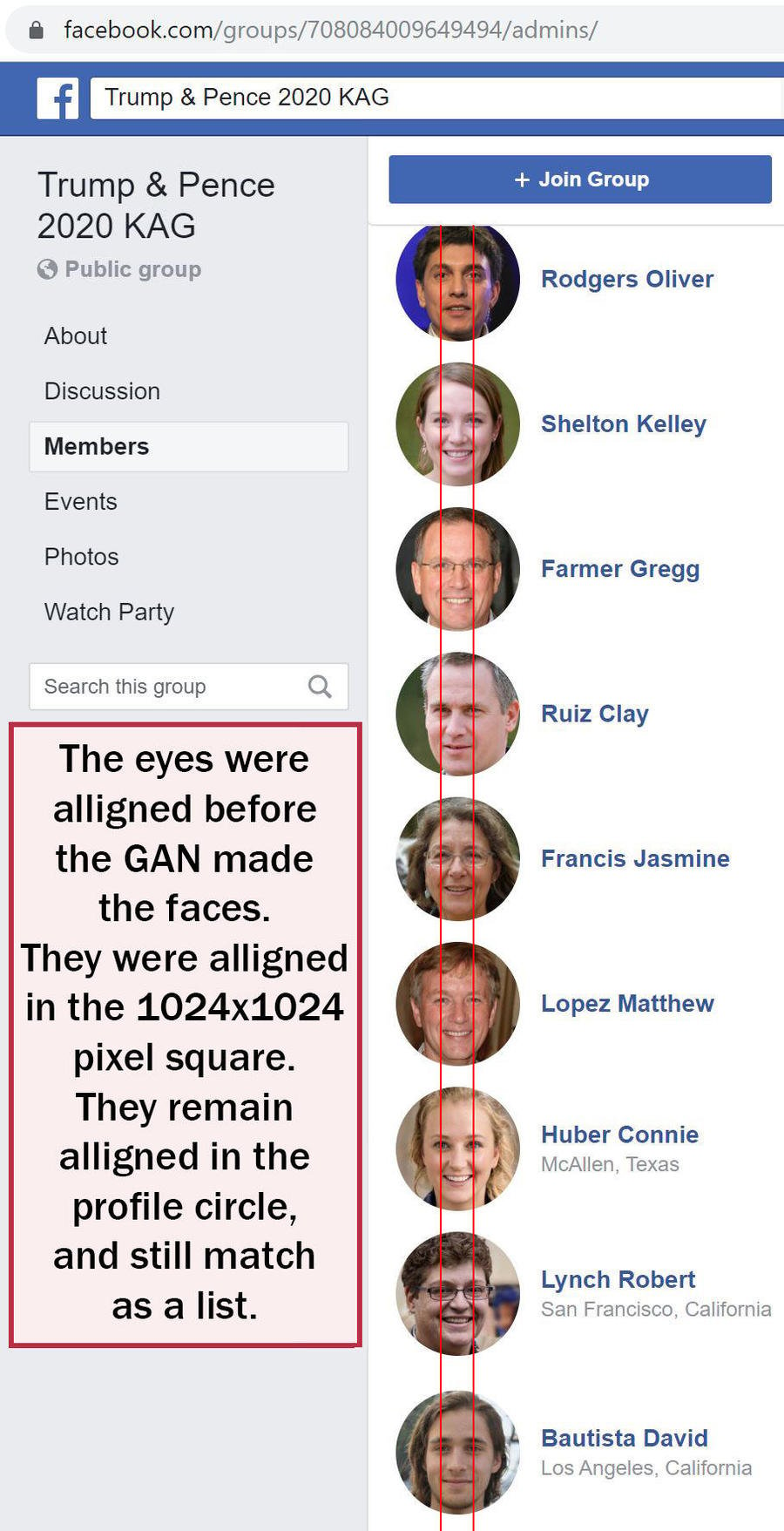

Most of the comments on this thread misinterpreted what they were seeing. They thought this blur was some sort of average face. It's not. What this otherwise blurry image depicts is the eyes acting as anchor points: no matter which way the faces were looking, left, right or center, the eyes were all rendered in the same position in the square. What an important revelation! The sample set of real human faces fed into the StyleGAN program had all been positioned, sized and cropped so that their eyes sat at a constant position within the 1024x1024 pixel square. The resulting output reflects that underlying data structure. This geometric "rule" is then carried by the synthetic faces from the bounds of that perfect square into the bounds of a circular profile photo on Facebook, automatically snapping into place the way circles in squares do.
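To see why blending many faces leaves only the eyes sharp, here is a minimal sketch in Python. It is not the process Conspirator0 and Dr ZQ used; it is a toy under stated assumptions. The 16x16 grid stands in for the 1024x1024 square, the `EYES` coordinates are hypothetical, and `fake_face` and `blend` are illustrative helper names. Each toy face has dark pixels at the same two eye positions plus one dark feature at a random spot, and the blend is a plain per-pixel average.

```python
import random

SIZE = 16                  # tiny stand-in for the 1024x1024 square
EYES = [(6, 5), (6, 10)]   # hypothetical fixed (row, col) eye positions


def fake_face(rng):
    """Toy 'face': white image with dark eyes at fixed positions and
    one extra dark feature (an earring, say) at a random position."""
    img = [[1.0] * SIZE for _ in range(SIZE)]
    for r, c in EYES:
        img[r][c] = 0.0
    img[rng.randrange(SIZE)][rng.randrange(SIZE)] = 0.0
    return img


def blend(images):
    """Per-pixel average of equally sized grayscale images."""
    n = len(images)
    return [
        [sum(img[r][c] for img in images) / n for c in range(SIZE)]
        for r in range(SIZE)
    ]


rng = random.Random(0)     # seeded so the run is repeatable
blended = blend([fake_face(rng) for _ in range(500)])

# Pixels shared by every face stay fully dark after averaging.
eye_values = [blended[r][c] for r, c in EYES]
# Features that land somewhere different each time wash out toward white.
other_min = min(
    blended[r][c]
    for r in range(SIZE)
    for c in range(SIZE)
    if (r, c) not in EYES
)
print("eyes:", eye_values, "darkest other pixel:", other_min)
```

Anything that sits in the same place in every image survives the average, while everything that moves around fades into a blur. That is the pattern the 5,000-face blend shows.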

The graphic below shows eight synthetic profiles that were made by TheBL in November, all with names beginning with S: Smith, Smith, Smith, Shane, Scott, Susan, Sue and Sammy. Notice how the look of the image changes when it is cropped as a circle, but that at the widest and tallest dimensions none of the image has been lost. The large central image was made by layering all eight profiles on top of each other. It is not the same process that Conspirator0 and Dr ZQ used, but it has enough layers to get the idea across.
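The circle-in-a-square geometry can be sketched in a few lines of Python. This is only an illustration under assumptions: the eye coordinates and the 170-pixel thumbnail size are hypothetical values chosen for the example, not measurements from StyleGAN output or from Facebook, and the function names are made up here.

```python
import math


def inside_inscribed_circle(x, y, size=1024):
    """True if pixel (x, y) survives a circular crop inscribed
    in a size x size square image."""
    center = (size - 1) / 2
    radius = size / 2
    return math.hypot(x - center, y - center) <= radius


def scale_point(x, y, src=1024, dst=170):
    """Map a point from the source square to a smaller square thumbnail."""
    factor = dst / src
    return (x * factor, y * factor)


# Hypothetical eye coordinates, for illustration only.
LEFT_EYE = (384, 480)
RIGHT_EYE = (640, 480)

for name, (x, y) in (("left eye", LEFT_EYE), ("right eye", RIGHT_EYE)):
    print(name, inside_inscribed_circle(x, y), scale_point(x, y))

# Corners are cut away by the circle, but the midpoints of the edges survive.
print("corner kept:", inside_inscribed_circle(0, 0))
print("edge midpoint kept:", inside_inscribed_circle(0, 512))
```

Because the crop and the resize are the same for every square image, a feature that sits at a fixed position in the square lands at a fixed position in every circular thumbnail too.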

Of course, this geometry could be defeated by someone who cared to mix it up. There is another website offering stock-photo synthetic faces with a neutral background that can easily be made rectangular. Unlike the random assortment offered up by ThisPersonDoesNotExist, its format allows you to browse through a collection. Whoever is churning out the fake profiles for TheBL (it could be automated) is not even matching the fake names to the apparent gender of the synthetic faces.

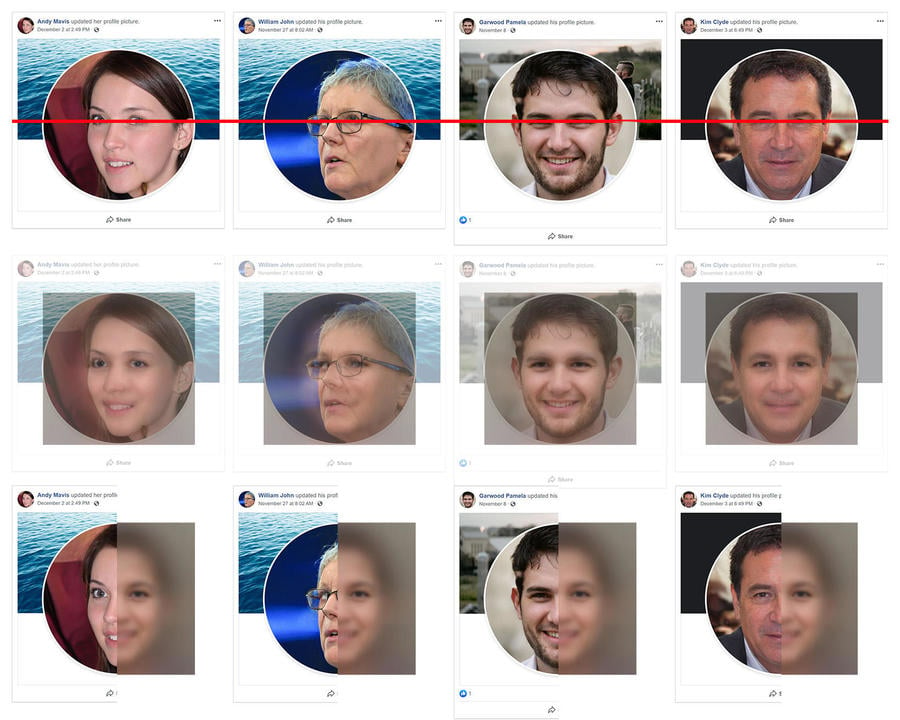

Here is a sample of four of those: Andy, Kim, Pamela and William.

Let's look at them as they appear cropped into a Facebook profile. (Note: Garwood Pamela's rectangular profile box has a slightly different dimension because that picture got one like on Facebook.) In the middle row I put the square of the 5,000 face blend behind a slightly transparent layer of the profiles, and in the bottom row we can compare them side by side.

What does it look like when TheBL installs an entire Facebook group administration team consisting of fake profiles made with synthetic faces?

These synthetic-face profiles are just as fake as any of the other BL profiles made with photos from Unsplash. Inauthentic behavior is inauthentic behavior, no matter how it's dressed up. Some fake profiles never offer a face at all. The picture could be a bald eagle, a puppy, an American flag, or a bouquet of flowers (they are also doing lots of flowers now).

Lead Stories reported all the fake profiles we found to Facebook just before publication of this article.

Come back next week, when we'll take a closer look at what kind of content these fake people are putting out and examine some of the tools their "operators" seem to be using. Also expect more information on what could be behind those flowers.

Additional material

- Here you can watch a video about StyleGAN: A Style-Based Generator Architecture for Generative Adversarial Networks

- Here's a good technical article about deep learning: https://www.lyrn.ai/2018/12/26/a-style-based-generator-architecture-for-generative-adversarial-networks/