Did Instagram and Facebook recently change the feed ranking so that no more than 7% of friends and followers can see a user's posts, and does commenting "Yes" on a post trick the algorithm into showing more of the page's posts to more people? No, that's not true: Versions of this message have been circulating since at least 2014 and are a play for engagement known as "comment baiting." The way news feed ranking operates is much more complex than tracking a simple "Yes" comment, and engagement baiting can even backfire and cause a post to be downranked.

Various forms of this message have been circulating for years. There are versions designed for both Facebook and Instagram audiences. This example mentioning both Instagram and Facebook surfaced recently on Facebook. This post (archived here) was published by Vaxxed Global Movement on March 2, 2021. The text of the meme reads:

THIS IS A TEST

Instagram has been limiting our posts so that no more than 7% of our friends see our posts.

If you see this post, please simply comment with "Yes" and then like it. This way our ranking will improve and Facebook will start showing our posts to more of our friends.

Thank you so much for your help!

This is what the post looked like on Facebook at the time of writing:

(Source: Facebook screenshot taken on Fri Mar 5 14:32:01 2021 UTC)

In early 2019, this rumor was making the rounds on Instagram. On January 22, 2019, Instagram posted a thread on Twitter to clear up the confusion and explain how the Instagram feed is curated.

We've noticed an uptick in posts about Instagram limiting the reach of your photos to 7% of your followers, and would love to clear this up.

-- Instagram (@instagram) January 22, 2019

The two tweets which follow explain:

What shows up first in your feed is determined by what posts and accounts you engage with the most, as well as other contributing factors such as the timeliness of posts, how often you use Instagram, how many people you follow, etc.

We have not made any recent changes to feed ranking, and we never hide posts from people you're following - if you keep scrolling, you will see them all. Again, your feed is personalized to you and evolves over time based on how you use Instagram.

There are several versions of this kind of direct plea for engagement, each with the stated goal of getting the algorithm to show more posts to more people. On February 6, 2019, the Facebook Newsroom published the article "No, Your News Feed Is Not Limited to Posts From 26 Friends" to address misleading information going viral in a copy/paste post on Facebook. Ramya Sethuraman, a Facebook product manager who works on ranking, was quoted:

The idea that News Feed only shows you posts from a set number of friends is a myth. The goal of News Feed is to show you the posts that matter to you so that you have an enjoyable experience. If we somehow blocked you from seeing content from everyone but a small set of your friends, odds are you wouldn't return.

In December 2017, Facebook Newsroom published an article about changes being implemented to fight engagement bait on Facebook. The article identified several methods of engagement baiting that would be downranked: vote, react, share, tag and comment baiting. Updates to the article add information about additional languages the machine learning model can detect (now 23 languages), and a 2019 update says the model can now also detect engagement bait in the audio of a video. The article explains that individual engagement-baiting posts would be downranked, and that Pages that are habitual offenders would have all of their content demoted:

To help us foster more authentic engagement, teams at Facebook have reviewed and categorized hundreds of thousands of posts to inform a machine learning model that can detect different types of engagement bait. Posts that use this tactic will be shown less in News Feed.

Additionally, over the coming weeks, we will begin implementing stricter demotions for Pages that systematically and repeatedly use engagement bait to artificially gain reach in News Feed. We will roll out this Page-level demotion over the course of several weeks to give publishers time to adapt and avoid inadvertently using engagement bait in their posts. Moving forward, we will continue to find ways to improve and scale our efforts to reduce engagement bait.

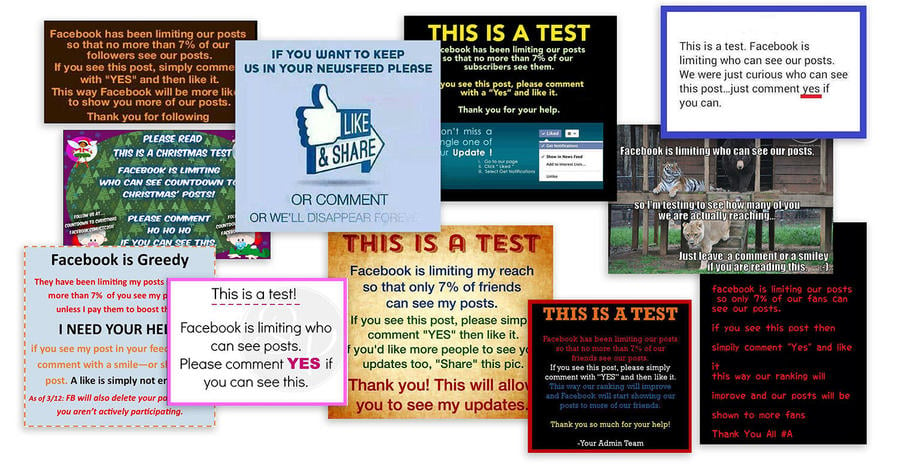

Below is an assortment of posts collected from Facebook that use this same basic structure as a play for engagement.

(Source: Facebook screenshots taken on Tue Mar 9 18:15:01 2021 UTC)

Facebook Engineering published an article on January 26, 2021, that explains some of the things the ranking algorithm takes into consideration when curating the news feeds of over 2 billion people. It takes many signals into account, and commenting is one of them, but as already explained, artificial engagement from comment baiting such as "Type Yes" does not register the same way as engagement with genuinely compelling content. The ranking algorithm also does not work the same way for everyone; it's personalized. This engineering article is very technical, as the quote below demonstrates, but the video included with the article explains the ranking process in layman's terms:

For some, the score may be higher for likes than for commenting, as some people like to express themselves more through liking than commenting. For simplicity and tractability, we score our predictions together in a linear way, so that $V_{ijt} = w_{ijt1}Y_{ijt1} + w_{ijt2}Y_{ijt2} + \dots + w_{ijtk}Y_{ijtk}$. Note that this linear formulation has an advantage: Any action a person rarely engages in (for instance, a like prediction that's very close to 0) automatically gets a minimal role in ranking, as $Y_{ijtk}$ for that event is very low. To personalize beyond this dimension, we continue researching personalization based on observational data. People with higher correlation gain more value from that specific event, as long as we make this method incremental and control for potential confounding variables.
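To make the linear formulation quoted above more concrete, here is a minimal Python sketch of a weighted sum of predicted actions. It is only an illustration, not Facebook's code: the function name `linear_value`, the three actions (like, comment, share), and every weight and probability below are hypothetical values chosen for the example.

```python
from typing import Sequence


def linear_value(weights: Sequence[float], predictions: Sequence[float]) -> float:
    """Return the weighted sum of predicted action probabilities.

    Mirrors the quoted form V = w1*Y1 + w2*Y2 + ... + wk*Yk for a single
    person and post. All inputs are illustrative assumptions.
    """
    if len(weights) != len(predictions):
        raise ValueError("weights and predictions must have the same length")
    return sum(w * y for w, y in zip(weights, predictions))


# Hypothetical weights for three actions: like, comment, share.
weights = [1.0, 4.0, 8.0]

# Hypothetical predicted probabilities that one person takes each action.
frequent_liker = [0.50, 0.10, 0.02]   # this person often likes posts
rare_liker = [0.001, 0.10, 0.02]      # like prediction very close to 0

score_frequent = linear_value(weights, frequent_liker)
score_rare = linear_value(weights, rare_liker)

print(f"frequent liker: {score_frequent:.3f}")
print(f"rare liker:     {score_rare:.3f}")
```

With these made-up numbers, the like term contributes 0.5 to the first score but only 0.001 to the second. That matches the point in the quote: an action a person rarely takes automatically plays a minimal role in that person's ranking.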

(Editors' Note: Facebook is a client of Lead Stories, which is a third-party fact checker for the social media platform. On our About page, you will find the following information:

Since February 2019 we have been actively part of Facebook's partnership with third party fact checkers. Under the terms of this partnership we get access to listings of content that has been flagged as potentially false by Facebook's systems or its users and we can decide independently if we want to fact check it or not. In addition to this we can enter our fact checks into a tool provided by Facebook and Facebook then uses our data to help slow down the spread of false information on its platform. Facebook pays us to perform this service for them but they have no say or influence over what we fact check or what our conclusions are, nor do they want to.)