Did Facebook add a new "shake phone to report a problem" feature that causes people to accidentally and unknowingly report their friends' posts, which in turn sends those friends to "Facebook Jail" (a colloquial term for an account that has been restricted)? No, that's not true: There is a feature on Facebook's mobile app that uses a shake to open a reporting dialogue, but it is only for reporting problems with the functionality of the app, not for reporting problematic content. Furthermore, after the dialogue opens, the report still needs to be manually filled out and sent in. A person cannot file a technical report accidentally just by shaking their phone. If someone's account has been restricted, it is not because a friend was shaking their phone.

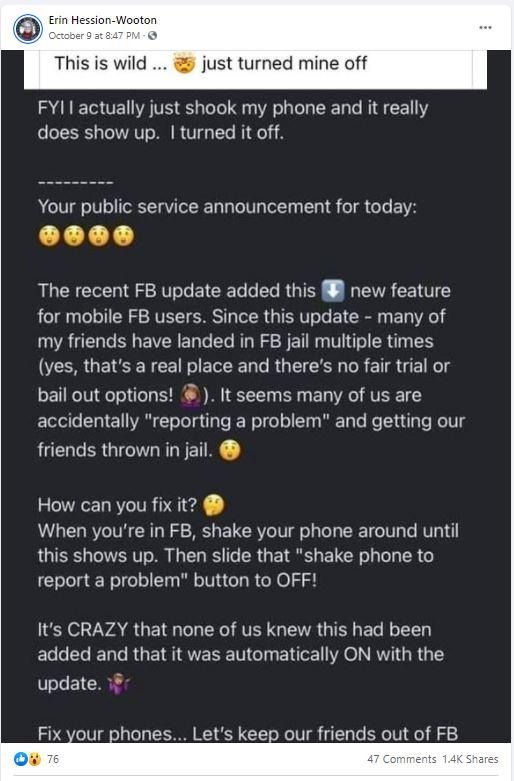

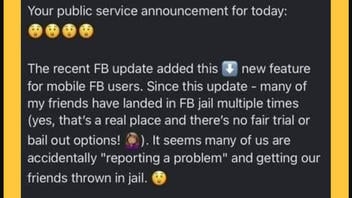

The claims in this post have been circulating on Facebook since at least 2019 and have resurfaced. One example is this post published on Facebook on October 9, 2021. The text in the screenshot reads:

This is wild ... just turned mine off

FYI I actually just shook my phone and it really does show up. I turned it off. Your public service announcement for today:

The recent FB update added this new feature for mobile FB users. Since this update - many of my friends have landed in FB jail multiple times (yes, that's a real place and there's no fair trial or bail out options!). It seems many of us are accidentally "reporting a problem" and getting our friends thrown in jail.

How can you fix it?

When you're in FB, shake your phone around until this shows up. Then slide that "shake phone to report a problem" button to OFF! It's CRAZY that none of us knew this had been added and that it was automatically ON with the update.

Fix your phones... Let's keep our friends out of FB jail!

This is how the post appeared on Facebook at the time of writing:

(Image source: Facebook screenshot taken on Thu Oct 14 17:01:21 2021 UTC)

This claim can be broken down into two questions: How does the "shake to report" feature work, and why does Facebook place temporary restrictions on some accounts?

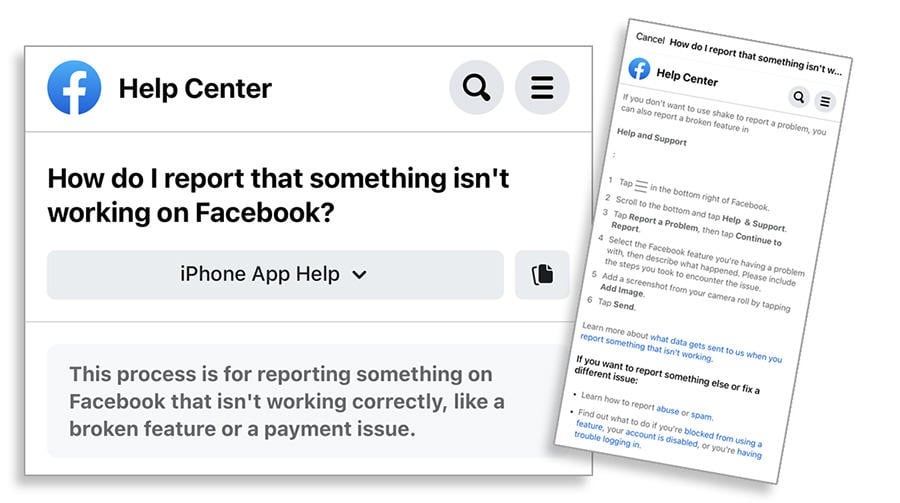

When a person is using the Facebook app and wants to report a functional problem, shaking the phone will open up a reporting dialogue. Shaking the phone does not submit a report. The heading "Shake phone to report a problem" has two options: one is a slide button that can be switched to turn the feature off; the other is "Learn More," which opens Help Center details that describe the six steps required to file a report. When the dialogue opens, it is clear that this feature is for reporting a certain type of technical problem and is not used for reporting abuse or spam. It says:

This process is for reporting something on Facebook that isn't working correctly, like a broken feature or a payment issue.

(Image source: Lead Stories composite of Facebook mobile screenshots taken on Thu Oct 14 15:34:15 2021 UTC)

There is a separate Help Center dialogue that explains how to report abuse. The types of reportable content listed there are: profiles, posts, posts on your timeline, photos and videos, messages, pages, groups, events, comments and ads. An additional section is devoted to special types of reports, such as child abuse and human trafficking. These reports are typically started from the post that is being reported, not from the shake dialogue.

On June 23, 2021, Facebook updated its article about restricting accounts. Facebook users sometimes call account restrictions "Facebook Jail." The article explains the strike system, under which violations of Facebook's Community Standards may incur a warning or restrictions on a person's account for increasing periods of time.

For most violations, your first strike will result in a warning with no further restrictions. If the Facebook company removes additional posts that go against the Facebook Community Standards or Instagram Community Guidelines in the future, we'll apply additional strikes to your account, and you may lose access to some features for set periods of time.

If a report was made accidentally, it would not result in an account restriction unless the post in question also happened to be in violation of community guidelines. Reports from other Facebook users do not automatically result in account restrictions without first being reviewed. Facebook uses both technology and review teams to detect violations. Facebook's technology can detect violations without a report even being filed. According to Facebook, 90% of the content that is removed was found first by technology.

(Editors' Note: Facebook is a client of Lead Stories, which is a third-party fact checker for the social media platform. On our About page, you will find the following information:

Since February 2019 we are actively part of Facebook's partnership with third party fact checkers. Under the terms of this partnership we get access to listings of content that has been flagged as potentially false by Facebook's systems or its users and we can decide independently if we want to fact check it or not. In addition to this we can enter our fact checks into a tool provided by Facebook and Facebook then uses our data to help slow down the spread of false information on its platform. Facebook pays us to perform this service for them but they have no say or influence over what we fact check or what our conclusions are, nor do they want to.)